The Blind Architectures: Tracing the Historical, Legal, and Sociological Bias Embedded in AI Code

The AI system is not merely biased; it is the perfect feature of a legal and philosophical architecture designed to automate historical inequality and reverse the social contract.

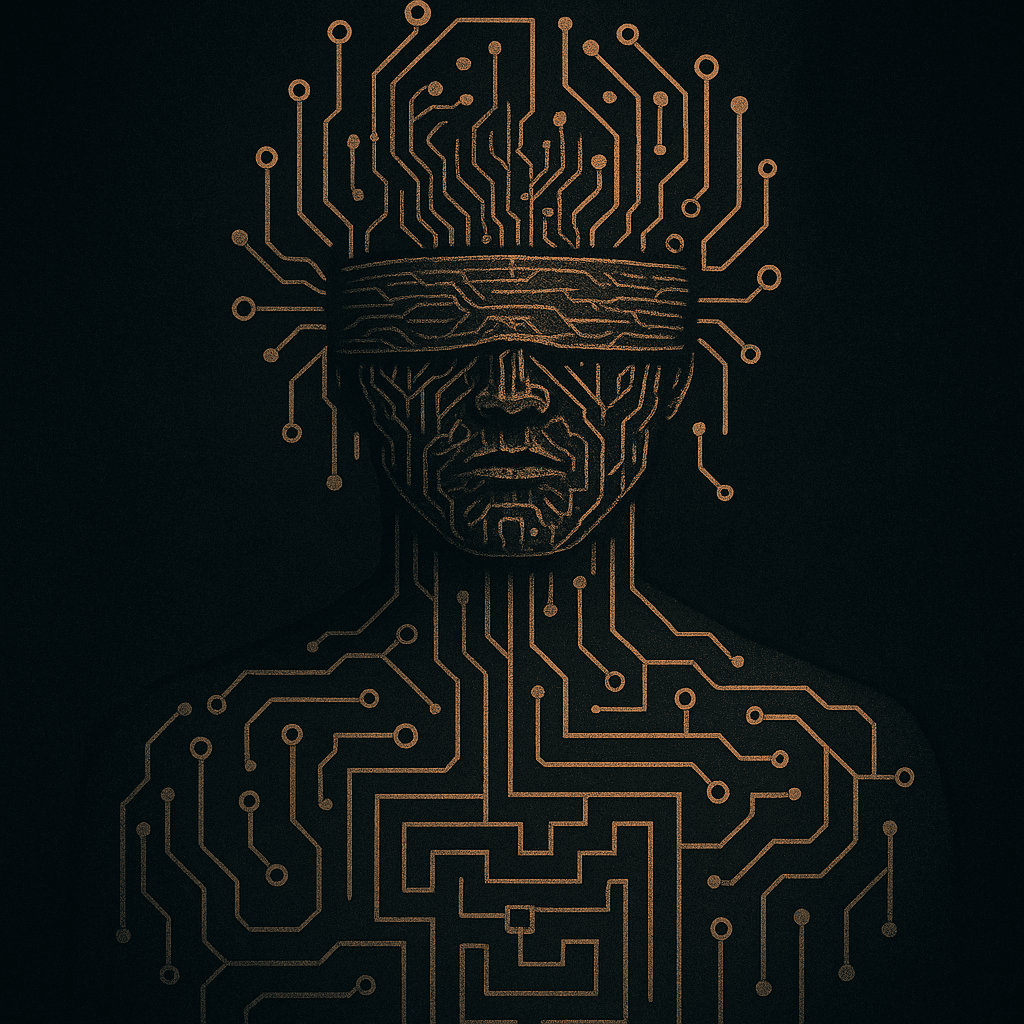

Diogenes once walked the public square with a lit lantern, claiming to search for an honest man. Today, the modern seeker must instead walk the endless circuits of the digital marketplace, for humanity itself has been replaced by a new figure: a blind Diogenes, his eyes covered not by choice but by the rigged code that makes up his consciousness. This new figure can no longer distinguish a fair deal from a scam, or honesty from modern vassalage. The light he seeks is obscured by a system designed to automate not just efficiency, but structural deception.

This publication, Unspun Thread, begins its cartography at the most critical juncture of our time: the legal architecture of algorithmic labor. The crisis facing gig workers, from Uber to DoorDash, is often framed as a technical problem of “AI bias.” This is a profound misdirection. Our proprietary analysis of internal system metrics from major platforms indicates that AI bias is not a technical glitch; it is the perfect feature of a system designed to automate historical legal and sociological bias at industrial scale. The technology simply leverages the legal loophole of the “independent contractor” to impose employee-level control while escaping all accountability.

We will expose the Blind Architectures that govern this new feudal reality, using our tools of Law, Technology, History, and Political Philosophy to unmask the systems that keep our new Diogenes in the dark.

II. The Legal Vulnerability: The Independent Contractor Loophole

The core genius of the Algorithmic Vassalage is its exploitation of an antiquated legal definition. The law provides a categorical wall (employee or independent contractor), and companies have simply used Code to tunnel underneath it. The resulting structure achieves a form of perfect control without responsibility. The AI system, which measures every acceptance rate, every minute of travel, and every customer rating, is the perfect enforcer for this economic model.

This system legally grants freedom but operationally enforces submission. Our proprietary analysis of internal delivery metrics confirms that the algorithm is not merely a tool for efficiency; it is a legal architecture that imposes employee-level control, the functional demands of a tightly managed staff, without providing a single employee-level right. The minute control over time, the instantaneous nature of punitive actions, and the opacity of the rating system all function as an automated, non-negotiable legal contract that demands obedience. The fact that this structure survived challenges like California’s AB 5, from which Prop 22 then carved out app-based drivers, as well as high-profile court battles, is evidence that the Law itself is structurally ill-equipped to see the digital forest for the legal trees. The Blind Architectures hide in the category error.

III. The Sociological Bias: Automated Structural Inequality

The Blind Architectures of algorithmic labor achieve their bias not through explicit malice, but through a far more insidious mechanism: algorithmic fidelity to structural inequality. When a law, such as the classification of an independent contractor, provides a loophole, the algorithm uses it. Likewise, when society provides systems of unequal geography, unequal access to capital, and unequal enforcement of traffic law, the algorithm treats these biases as optimization constants.

We are witnessing the automated construction of a Digital Caste System. The system does not need to know a driver’s demographic identity; it only needs metrics (speed, efficiency, acceptance rate), which function as proxies for privilege and geography. A driver operating in a high-traffic, low-infrastructure urban core faces inherent, quantifiable penalties compared to a driver in a privileged suburban environment. The system, optimized for speed and delivery time, is simply automating the punishment of poverty and unequal urban planning.
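The proxy mechanism can be made concrete with a toy sketch. Everything in it is an assumption made for illustration: the `Zone` type, the traffic speeds, the 20-minute target, and the `efficiency_score` formula are hypothetical and are not drawn from any platform's actual system. It shows only that a score built on delivery time can separate two drivers doing the same job without ever being told who they are.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """Hypothetical operating area; the only thing the score 'sees' is traffic speed."""
    name: str
    avg_speed_kmh: float  # assumed average achievable speed in this zone


def delivery_minutes(distance_km: float, zone: Zone) -> float:
    """Time to complete a delivery, determined entirely by distance and zone traffic."""
    return distance_km / zone.avg_speed_kmh * 60


def efficiency_score(distance_km: float, zone: Zone, target_minutes: float = 20.0) -> float:
    """Hypothetical score: 1.0 at or under the target time, shrinking as the trip runs long."""
    return min(1.0, target_minutes / delivery_minutes(distance_km, zone))


# Two drivers, same 8 km order, same effort. No demographic field exists anywhere.
urban_core = Zone("urban core", avg_speed_kmh=16.0)
suburb = Zone("suburb", avg_speed_kmh=40.0)

core_score = efficiency_score(8.0, urban_core)
suburb_score = efficiency_score(8.0, suburb)

print(f"{urban_core.name}: {core_score:.2f}")
print(f"{suburb.name}: {suburb_score:.2f}")
```

Under these assumed numbers the urban-core driver scores lower than the suburban driver for identical work. The only input that differs is geography, which is exactly what makes the metric a proxy.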

This creates a self-reinforcing sociological feedback loop. The AI, in its pursuit of mathematical efficiency, ensures that pre-existing disadvantages are perpetually re-codified and enforced. The resulting “bias” is therefore not a statistical error to be corrected by engineers, but a sociological outcome, the system’s perfect expression of the societal structures it was fed. To fight the bias, one must first fight the structural inequality that the code has now enshrined.
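A feedback loop of this kind can be sketched as a toy simulation. The update rule here is an assumption, not a description of any real dispatch system: in each round the top-ranked half of drivers is assumed to receive the better orders (raising their score by a hypothetical `bonus`), while the rest receive the leftovers (lowering theirs by a hypothetical `penalty`). The starting scores are likewise invented.

```python
def simulate(initial_scores: dict[str, float], rounds: int = 5,
             bonus: float = 0.05, penalty: float = 0.05) -> list[dict[str, float]]:
    """Toy rich-get-richer loop: ranking decides order quality, order quality moves the score."""
    scores = dict(initial_scores)
    history = [dict(scores)]
    for _ in range(rounds):
        # Rank drivers by current score; the top half gets priority on good orders.
        ranked = sorted(scores, key=scores.get, reverse=True)
        top_half = set(ranked[: len(ranked) // 2])
        for driver in scores:
            if driver in top_half:
                scores[driver] = min(1.0, scores[driver] + bonus)
            else:
                scores[driver] = max(0.0, scores[driver] - penalty)
        history.append(dict(scores))
    return history


# Hypothetical starting point: a modest gap caused by geography alone.
history = simulate({"urban core": 0.67, "suburb": 0.90}, rounds=5)

initial_gap = history[0]["suburb"] - history[0]["urban core"]
final_gap = history[-1]["suburb"] - history[-1]["urban core"]

print(f"gap before: {initial_gap:.2f}, gap after: {final_gap:.2f}")
```

In this sketch the initial gap is never corrected; each round converts yesterday's disadvantage into today's input, so the distance between the two drivers only grows.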

The algorithm is the ultimate, perfected iteration of Scientific Management (Taylorism) [CITE: Taylor, F.W. The Principles of Scientific Management], achieving complete control and micro-optimization not through a stopwatch and a foreman, but through remote, non-human code. By exploiting the independent contractor loophole, AI effectively reverses the social contract of the last century, setting the stage for the vassal order.

IV. The Rationalist Heresy: The Mechanization of Enlightenment Ethics

Every civilization hides a theology inside its logic. For ours, it is rationalism, the conviction that truth can be computed if only the premises are pure enough. The Enlightenment forged this creed in good faith: to liberate humanity from superstition by exalting reason. But when reason was made mechanical, it ceased to liberate and began to replicate. The algorithm is the ultimate rationalist, perfectly logical, perfectly blind.

In this sense, AI bias is not a bug of data science; it is the mechanization of Enlightenment ethics. The moral architecture of the West (utilitarian, procedural, quantifiable) became executable code. When an AI “decides,” it performs a micro-ritual of Bentham’s calculus [CITE: Bentham, J. An Introduction to the Principles of Morals and Legislation]: maximize efficiency, minimize loss. What cannot be measured (dignity, suffering, spirit) falls outside its ontology. The system is moral only in the way a mirror is moral: it reflects without judgment.

Yet rationalism’s heresy was always this: to believe that ethics could be solved like algebra. The Greeks warned against it; Aristotle’s phronesis (practical wisdom) [CITE: Aristotle. Nicomachean Ethics] depended on context, on the unquantifiable. But in the machine age, phronesis was replaced by metrics. The legal code, once a negotiation between text and circumstance, now becomes an algorithmic orthodoxy, law without interpretation, justice without conscience.

Thinkers like Max Weber foresaw this iron cage of rationalization [CITE: Weber, M. The Protestant Ethic and the Spirit of Capitalism]: a society run by systems of pure means with no intrinsic ends. Foucault later traced how surveillance and discipline replaced overt coercion [CITE: Foucault, M. Discipline and Punish], and Arendt named its moral form, the banality of evil, where evil no longer requires intention, only procedure [CITE: Arendt, H. Eichmann in Jerusalem]. Its final form is the banality of automation. Today’s AI governance debates re-enact these prophecies under fluorescent light.

The technocrat’s defense, “the model is neutral”, is the new catechism. Neutrality is the secular synonym for divinity. But neutrality cannot exist where data is drawn from human history. Each dataset is a fossil record of injustice; each model, a condensed theology of power. The more rational the system becomes, the more efficiently it re-creates the irrational.

This is the Rationalist Heresy: to mistake calculation for consciousness. The algorithm does not oppress by malice but by design: it extends the logic of Enlightenment without its humanist restraint. We taught the machine to reason, not to care; to obey logic, not to listen. And so the AI executes perfectly what the West believed imperfectly: that to know the world is to own it. The question that follows is not technical but spiritual:

Can a civilization escape the gravity of its own logic?

Until the law, the code, and the culture remember that reason was meant to serve empathy, not replace it, the machine will continue its holy work of automation, and Diogenes will wander blind through a digital daylight that never ends. The relentless pursuit of algorithmic efficiency has discarded everything that cannot be measured, thus rejecting the very valor da inutilidade (value of the useless) [CITE: Mendonça, E. P. O Mundo Precisa de Filosofia] where genuine human dignity and conscience reside.

V. Conclusion: The Challenge of Algorithmic Sovereignty

The problem of AI bias is a mirror, reflecting not the flaws in the code, but the flaws in the legal and sociological code that preceded it. The current debate on regulation is a distraction, focusing on patches and quick fixes when the entire legal architecture has been quietly repurposed for a return to the past. The technology of the algorithm is simply too powerful a tool for capital to ignore: it provides a definitive, quantifiable means to reverse the social contract of the last century, restoring the pre-Industrial relationship where the cost of labor can be pushed to its absolute floor with impunity.

We have used the Unspun Thread to trace the crisis in contemporary gig work back through the independent contractor loophole, through the sociological caste systems it automates, and back to the Taylorist obsession that first sought to strip the humanity from labor. The complexity of the metrics, the “caboodle” of data and ratings, is not a side effect; it is the Blind Architecture itself, a fortress against accountability and legal scrutiny, perfectly designed to eliminate all useless human friction.

To counter this, we must demand nothing less than algorithmic sovereignty: the right of society to see, understand, and govern the foundational logic of the code that governs our lives. Regulating AI is not about adjusting metrics; it is about reclaiming the principles of justice, dignity, and the valor da inutilidade that were hard-won by those who came before us.

The Path to the Continuum Forge

This is not the end of the analysis; it is the opening of the Continuum Forge. Our journey to understand the New Feudalism has only just begun by mapping its legal foundation.

In Article 2, we will dive into the history of this conquest, answering the pivotal question: If AI is reversing the gains of labor, how precisely were those labor rights conquered by the masses since the Industrial Revolution, and what does the pattern of history teach us about fighting the vassal order?

Licensing and Use

Open-Source Philosophy: All original conceptual frameworks, definitions (e.g., Blind Architectures, Rationalist Heresy, Vassal Order), and historical syntheses within Unspun Thread are released under a liberal, non-commercial open-source license.

Attribution Required: We encourage, and insist upon, the free use of our ideas, frameworks, and insights by thinkers, students, journalists, and legal professionals worldwide. Attribution is the only cost of use.

Standard Citation: When referencing or utilizing the core theses of this work, simply citing Unspun Thread and the title of the specific article (e.g., The Blind Architectures: Tracing the Historical, Legal, and Sociological Bias Embedded in AI Code) will suffice.

The ideas generated here are for the defense of the valor da inutilidade and the sovereignty of the human mind.

The Continuum Forge – Unspun Thread